The FreeBSD Diary (TM)

Providing practical examples since 1998

Xplanet - improve your background

21 May 2004

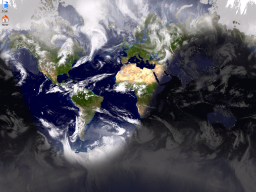

Xplanet is a program that displays a picture of the Earth. It was inspired by Xearth, and it can draw all the major planets and most satellites. I use it to provide the background image on my machines that run X. You can also download satellite images of cloud cover so that your background reflects reality, and I also display the daylight/darkness shadow. It makes for a very interesting and dynamic background. Have a look at their gallery of images. Very nice eye candy! The image shown here is an example of what I have on my laptop; the full screenshot is 1024x768. Also shown, from a different time of day, is what the screen looks like without clouds.

I am assuming you already have X up and running. I am also using KDE in this example. I'm sure the instructions for other window managers will be similar.

But wait! Where's the BSDCan update?

It's been just over 5 days since the Sunday BSDCan breakfast. I spent Monday doing nothing much. Tuesday involved a few emails. On Wednesday I made a lot of changes to the BSDCan website to get a /2004/ archive going; that should be ready soon. This morning (Thursday) I updated the ad-serving software. In the process, I found this half-completed article and thought I should update and commit it before it got lost. It's also a nice break from the accounting and receipt wrap-up of BSDCan.

Installing Xplanet

Use the ports, of course:

```
cd /usr/ports/astro/xplanet
make install
```

You could also try pkg_add -r xplanet, but for this, I prefer building from ports.

Configuring Xplanet

Here is how I configured Xplanet on my IBM ThinkPad T22 running FreeBSD 5.2.1:

With that, you should have an Xplanet background.

But wait! There's more!

You don't have clouds yet. The Xplanet Maps & Scripts page shows you how to add them. There are two parts: getting the map, and telling Xplanet about the map.

Cloud images are updated every three hours, so there is no sense in fetching the maps any more often than that. Please do not abuse this service: do not run your cronjob more often than every three hours. Many cloud map servers have been shut down because people fetched images more often than necessary.

Get the map

I second the advice given on the Xplanet Real Time Cloud Map page: install the script provided by Oliver White. His script downloads the file from a random mirror, which helps to distribute the load.
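If the download script you use does not already check how old your local copy is, a small guard can make an accidental extra run harmless. The following is a minimal sketch, not part of Oliver White's script: the helper name needs_refresh and the 180-minute threshold are assumptions, and the path is the clouds_2048.jpg file used later in this article. It relies on the -mmin primary, which both FreeBSD find and GNU find support.

```shell
#!/bin/sh
# Sketch only: a freshness guard for the cloud map download.
# needs_refresh is a hypothetical helper, and 180 minutes
# (three hours) is an assumed threshold matching the update rate.

# needs_refresh FILE MINUTES
# Exit status 0 if FILE is missing or was last modified more than
# MINUTES minutes ago; exit status 1 if it is still fresh.
needs_refresh() {
    nr_file=$1
    nr_minutes=$2
    # No local copy yet: a download is needed.
    [ -e "$nr_file" ] || return 0
    # find prints the path only when the file is older than the limit.
    [ -n "$(find "$nr_file" -mmin +"$nr_minutes" -print)" ]
}

# Example, at the top of a cron script:
#   needs_refresh ~/clouds/clouds_2048.jpg 180 || exit 0
```

Placing the check in the script, rather than relying only on the cron schedule, means a manual run or a misconfigured crontab still won't hit the mirrors more often than the images change.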

Note: I had to install

Tell Xplanet about the map

Xplanet has a rather extensive man page. It also has configuration files at

```
cloud_map=/home/dan/clouds/clouds_2048.jpg
```

The file referenced by the

In later versions of

```
[earth]
"Earth"
color={28, 82, 110}
cloud_map=/home/dan/clouds/clouds_2048.jpg
```

The settings not related to
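To tie the two parts together, the following sketch writes a minimal config file containing the [earth] settings shown above and starts Xplanet with it. The config path ~/.xplanet/clouds.conf and the helper name write_xplanet_config are hypothetical, chosen only for illustration; they are not necessarily where your system keeps Xplanet's configuration files. The -config option tells xplanet which configuration file to read, and -wait sets the number of seconds between redraws; check xplanet(1) for the options your version supports.

```shell
#!/bin/sh
# Sketch only: write a minimal Xplanet config pointing at the cloud map,
# then start xplanet with it. CONF is a hypothetical location and the
# 600-second redraw interval is an assumption; adjust both to taste.

CONF="$HOME/.xplanet/clouds.conf"
CLOUDS="$HOME/clouds/clouds_2048.jpg"

# write_xplanet_config CONFIG_FILE CLOUD_IMAGE
write_xplanet_config() {
    mkdir -p "$(dirname "$1")" || return 1
    # Same [earth] settings as the example above; only cloud_map
    # depends on where the cloud image is stored.
    cat > "$1" <<EOF
[earth]
"Earth"
color={28, 82, 110}
cloud_map=$2
EOF
}

write_xplanet_config "$CONF" "$CLOUDS"

# Start xplanet only if it is installed. -config names the config file;
# -wait is the number of seconds between redraws.
if command -v xplanet >/dev/null 2>&1; then
    xplanet -config "$CONF" -wait 600 &
fi
```

Because the cronjob replaces clouds_2048.jpg in place, a long-running xplanet picks up the new clouds on its next redraw without needing to be restarted.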

Creating a cronjob

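I created a shell script to invoke the above-mentioned perl script. This shell script ensures that we always have a valid cloud map:

```
#!/bin/sh
# Fetch a fresh cloud map and install it only if it is a real JPEG.
cd ~/clouds || exit 1

perl ~/clouds/download_clouds.pl

if [ -s clouds.jpg ]
then
    # Reject HTML error pages (e.g. a 404) saved under the .jpg name.
    RESULT=$(file clouds.jpg | grep -c "JPEG image data")
    if [ "$RESULT" -eq 1 ]
    then
        mv clouds.jpg clouds_2048.jpg
    else
        rm -f clouds.jpg
    fi
else
    # Empty or missing download; -f avoids an error if it doesn't exist.
    rm -f clouds.jpg
fi
```

It invokes the perl script, then makes sure that the resulting file is non-zero in size. The script then verifies that the file is a JPEG image, as opposed to an HTML file such as might be returned with a 404 error. If all is OK, it moves the fetched file over the stored file and finishes; otherwise it deletes the bad download and leaves the previous cloud map in place.

The cronjob I use to invoke the shell script is:

```
15 */3 * * * dan /home/dan/bin/clouds.sh
```

This entry is in /etc/crontab format, where the sixth field names the user the command runs as. It will run at 15 minutes past the hour, every three hours. However, why run it that often? For example, if you're doing this on your office system, why not restrict updates to something that resembles your working hours? Something more like this:

```
15 7-18/3 * * 1-5 /home/dlangille/bin/clouds.sh > /dev/null
```

Details:

- 15: at 15 minutes past the hour
- 7-18/3: every third hour from 07:00 through 18:00, that is, at 7, 10, 13, and 16
- * *: every day of the month, every month
- 1-5: Monday through Friday only

This entry has no user field, so it belongs in a personal crontab (edited with crontab -e) rather than in /etc/crontab.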
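The JPEG test in clouds.sh relies on the text that file(1) prints. As an alternative technique, you could check the first three bytes of the download for the JPEG signature FF D8 FF; an HTML error page starts with text such as "<html" and fails this test. This is a sketch, and the helper name is_jpeg is hypothetical.

```shell
#!/bin/sh
# Sketch only: detect a JPEG by its leading signature bytes (FF D8 FF)
# instead of by parsing file(1) output. is_jpeg is a hypothetical helper.

# is_jpeg FILE: exit status 0 if FILE is non-empty and starts with FF D8 FF.
is_jpeg() {
    [ -s "$1" ] || return 1
    # dd reads the first three bytes, od prints them in hex,
    # and tr removes od's spaces and newline, e.g. "ffd8ff".
    ij_magic=$(dd if="$1" bs=3 count=1 2>/dev/null | od -An -tx1 | tr -d ' \n')
    [ "$ij_magic" = "ffd8ff" ]
}

# Possible use in clouds.sh, in place of the file(1) test:
#   if is_jpeg clouds.jpg; then
#       mv clouds.jpg clouds_2048.jpg
#   else
#       rm -f clouds.jpg
#   fi
```

The signature check only confirms that the file begins like a JPEG; a download truncated partway through would still pass, so the file(1) approach and this one catch the same common failure (an HTML error page) rather than every possible one.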

Why restrict updates to working hours? To reduce the bandwidth consumed. This helps out both yourself (or your office) and the provider of the images. Every bit helps. Once the job has run successfully, you can stop cron from mailing you its output by making the crontab line end with:

```
> /dev/null 2>&1
```

This discards both standard output and standard error.

That should be everything you need to have a much more interesting background. Enjoy.